[Edit April 20 2021: Chris Satterlee generously developed install packages for both OS X here and Windows here ! His full GitHub repo is here. I’m very thankful for this help (it’s not straightforward at all to do this).]

[Edit April 25 2019: Please note this is not an iPhone or Android app, and I have no plans to release it as such. You can use your phone or any other camera to take pictures of your ground coffee, but then you need to install the application on either OS X or through Python (on any operating system) to analyze the data. Download the application package here.]

Today I would like to present an OS X application I have been developing for a few months. It turns out writing Python software for coffee is a great way to relax after a day of writing Python software for astrophysics.

When I became interested in brewing specialty coffee a few years ago, one of the first things that irritated me was our inability to recommend grind sizes for different coffee brewing methods, or to compare the quality of different grinders in an objective way. Sure, some laboratories have laser diffraction equipment that can measure the size of all particles coming out of a grinder, but few coffee geeks have access to equipment that costs several hundred thousand dollars.

At first, I decided to take pictures of my coffee grounds spread on a white sheet, and to use an old piece of software called ImageJ, developed by the National Institutes of Health mainly to analyze microscope images, to obtain a distribution of the sizes of my coffee grounds. This worked decently well, and allowed me to start comparing different grinders. Then Scott Rao made me realize that a stand-alone application that doesn’t need a complicated installation and that is dedicated to coffee would be of interest to many people in the coffee industry. Probably just the 10% geekiest of them, but that’s cool.

I’m hoping that this application will help us understand the effects of particle size distributions on the taste of coffee. I don’t think the industry really kept us in the loop with all the laser diffraction experiments, so hopefully we can help ourselves as a community.

If you are interested in measuring the particle size distribution of your grinder, then this app is for you, and it’s free. I placed it as “open source” on GitHub, so if you are a developer, you are welcome to send me suggestions in the form of pull requests (the developers will know what that means).

If you would like to get started, I suggest you read this quick installation guide, which will explain how to download the app and run it even though I am not a registered Apple Developer. Then, you can choose to either read this quick summary that will get you running with the basics, or this very detailed and wordy user manual that will guide you through all the detailed options the application offers you.

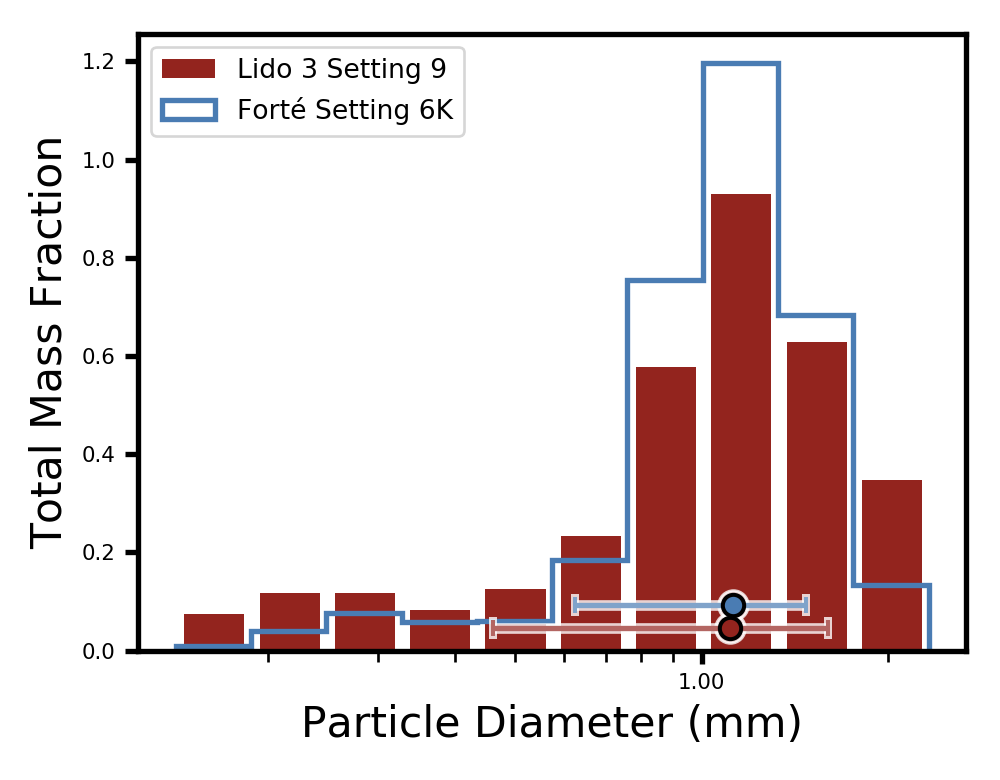

I would like to show you an example of what can be done with the software. Below, I am comparing the particle size distribution of the Baratza Forté grinder, which uses 54 mm flat steel burrs, with that of the Lido 3 hand grinder, which uses 48 mm conical steel burrs. I set both grinders in a way that produces a similar peak of average-sized particles with diameters around 1 mm, but as you can see, the particle size distributions are very different ! The Forté generates far fewer fines (with diameters below 0.5 mm) and slightly fewer boulders (with diameters of approximately 2 mm), which is indicative of a better quality grinder.

For now, the app is only intended to be used on OS X computers. But if you are running any other kind of system and know your way around Python, you can always download it directly from GitHub and run it with your own installation of Python 3.

I would like to thank Scott Rao for his excitement when I shared this project idea with him, and for beta testing the software. I would also like to thank Alex Levitt, Mitch Hale, Caleb Fischer, Francisco Quijano and Victor Malherbe for beta testing the software.

This is very, very cool. It is quite similar to the “portafilter basket hole size measuring” approach we took (to check portafilter basket hole quality).

I use a “light table” to take a photo of a basket, a reference size (basket inner diameter, usually) and then do a photographic hole size analysis. Here’s a photo of the result: https://decentespresso.com/img/decent_basket_1.jpg

John Weiss wrote it for me, and I’m happy to share the source with you. C code that runs on Linux, so should compile fine on OSX.

One tip: using a phone with an all-white image works as a very low cost light table for photographing baskets. I think that might work well as a cheap light table for photographing coffee grounds too.

– John from Decent Espresso


Thank you John ! I made an algorithm dedicated to calculating the surface of highly non-spherical particles while also separating two touching particles that would otherwise mimic a single large one. The separation works by selecting a reference pixel (the darkest one) and checking whether every path from that reference toward a given region of the particle has to pass through some bright (i.e. edge) pixels. If that’s the case, the region in question is dropped. The rest should be very similar to portafilter holes, yes ! If you invert your image colors and know the pixel scale, you may be able to use this software too 😛
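The separation step described in this comment can be sketched as a flood fill. This is a minimal illustration and not the app’s actual code: the function name `keep_connected_region`, the `edge_threshold` parameter and the choice of 4-connectivity are assumptions made for the example. Starting from the darkest pixel of a cluster, it keeps only the pixels that can be reached without stepping on a bright (edge) pixel, which amounts to dropping every region whose paths to the reference all cross bright pixels.

```python
from collections import deque


def keep_connected_region(image, cluster, edge_threshold):
    """Keep the part of `cluster` reachable from its darkest pixel.

    image: 2D list of brightness values (low = dark coffee, high = bright paper).
    cluster: iterable of (row, col) pixels that were thresholded as one blob.
    edge_threshold: hypothetical brightness above which a pixel counts as an edge.
    """
    cluster = set(cluster)
    # Reference pixel: the darkest one in the cluster.
    start = min(cluster, key=lambda rc: image[rc[0]][rc[1]])
    kept = {start}
    queue = deque([start])
    while queue:
        row, col = queue.popleft()
        # Visit the four direct neighbours (4-connectivity is an assumption).
        for nxt in ((row + 1, col), (row - 1, col), (row, col + 1), (row, col - 1)):
            if nxt in cluster and nxt not in kept:
                if image[nxt[0]][nxt[1]] <= edge_threshold:
                    kept.add(nxt)
                    queue.append(nxt)
    return kept


# Two dark particles joined by one brighter pixel (value 120).
image = [[10, 20, 30, 120, 40, 50, 60]]
cluster = [(0, col) for col in range(7)]
kept = keep_connected_region(image, cluster, edge_threshold=100)
print(sorted(kept))  # [(0, 0), (0, 1), (0, 2)]
```

In this toy example the pixels to the right of the bright pixel are dropped, because the only way to reach them from the darkest pixel crosses an edge.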


Thank you for this excellent app Jonathan, I’m very much looking forward to comparing grind particle distributions of every grinder I get my hands on!


Hi Jonathan,

This looks very interesting. Can I ask whether the results have been correlated with other means of grind analysis? Can it tell us particle sizes at ±1 standard deviation?

Is it yet known what the threshold is for a stddev so wide that effects on brew are significantly poorer?

Best regards, Mark.


Hi Mark, thank you. I have not correlated these results with other methods, but it would be an interesting experiment. Some things to note: sieving measures mass as a function of which particles can pass through a circular hole, and there’s no guarantee that all particles have passed unless you shake for a very long time. My software is a direct measurement of projected surface (on the sheet), as long as the data collection is done properly (it’s explained in the full user manual). Other quantities like volume and mass rely on some assumptions, e.g. a constant ratio of 3D surface to projected surface for all particles, or particles lying flat so that you see their largest axis when calculating volume (the assumptions I make are all detailed in the full manual too). I’m confident that the projected 2D surface could actually be used to calibrate other methods if the software is used correctly, because you can visually inspect the particle detection with the “outline images”. This is not true of particle volume or mass.

The next version will include error bars based on small-number statistics (Poisson errors) because this is the #1 limitation of this software. It will also include the ability to merge many images to get smaller errors.

As far as scatter vs taste, this is a very complex question. Immersion will be very different than percolation for example, as flow depends on the amount of fines. This software is also not made for detecting particles smaller than one pixel, so you’d need to take macro pictures with fines in a separate sample collection if you want to go down to very small sizes, and even then an iPhone camera won’t allow you to resolve the micron-sized dust. Plus, we would need to define “significantly poorer” in a taste perception sense, which isn’t easy. I can already say that the difference between Lido 3 and Forté in a V60 cup quality is quite stark, but I’m already using subjective language.
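To illustrate why merging images helps with the small-number statistics mentioned above, here is a minimal sketch of Poisson error bars on a particle-size histogram. It assumes the simplest estimate, an uncertainty of sqrt(N) on a bin that contains N particles; the app’s own implementation may well differ, and the function names are made up for this example.

```python
import math


def histogram_with_poisson_errors(diameters_mm, bin_edges_mm):
    """Count particles per bin and attach sqrt(N) Poisson errors."""
    counts = [0] * (len(bin_edges_mm) - 1)
    for diameter in diameters_mm:
        for i in range(len(counts)):
            if bin_edges_mm[i] <= diameter < bin_edges_mm[i + 1]:
                counts[i] += 1
                break
    errors = [math.sqrt(n) for n in counts]
    return counts, errors


def merge_counts(*count_lists):
    """Add up the per-bin counts of several images taken with the same bins."""
    return [sum(bins) for bins in zip(*count_lists)]


diameters = [0.2, 0.3, 0.4, 1.0, 1.1, 1.2, 1.3, 2.0]  # made-up data
edges = [0.0, 0.5, 1.5, 2.5]
counts, errors = histogram_with_poisson_errors(diameters, edges)
print(counts)  # [3, 4, 1]

# Pretend four images gave the same counts: the relative error halves.
merged = merge_counts(counts, counts, counts, counts)
rel_single = errors[1] / counts[1]
rel_merged = math.sqrt(merged[1]) / merged[1]
print(rel_single, rel_merged)
```

With four times as many particles in a bin, the relative error drops by a factor of two, which is the motivation for combining several images.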


Really exciting! Thank you for working on this! I’ll need to try getting this working on Windows.


I’ll share a how-to for Windows soon. You’ll need to launch it with Python 3 on non-OS X systems


That would be great! Thanks again!


Hi, any progress on the how-to?

I have Python installed, but when I run coffeegrindsize.py I get an error saying the PIL module is not found. I assume there are some dependencies that need to be installed or a setup program that needs running, but I’m not sure where to find these things.


See comment 23. April 2019.

You will find all necessary pip-commands.

Best, Stefan


“I made an algorithm that is dedicated to being able to calculate the surface of highly non-spherical particles, and at the same time separate two particles that are touching to mimic a large one”

Oh ah, that’s pretty fancy, and you’re right, that’s different from our basket analysis software, which measures overall light coming through each hole, and assumes circularity.

Much has been speculated about coffee particle shape and extraction efficiency.

Do you think your software could be extended to give some insight in that direction?

In particular, I’d love to have an approximate measure of *surface area* which might be calculated by looking at how round the particles are.

As it sounds like you’re almost tracing the edges of each particle, this might be possible…

-john


Oh yeah sure, the software already measures *projected* surface area. It does not assume circularity in this particular measurement because it just delineates the non-circular contour and counts the number of pixels included. The software already measures the (projected) circularity of all particles too, by calculating the ratio of the projected particle area to the area of the smallest circle that encompasses the full particle. The circularity of each particle is output in a CSV file when you choose “save data”, but currently the software doesn’t do anything else with it. It would be very easy to add an option for displaying a histogram of circularities, or even scatter plots of circularity vs surface.
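As a rough illustration of the circularity definition given in this comment (projected area divided by the area of the smallest enclosing circle), here is a small self-contained sketch. It is not taken from the app: the function names are hypothetical, pixels are treated as unit squares, and the enclosing circle is found by brute force over pairs and triples of pixel corners, which is only practical for small particles.

```python
import math
from itertools import combinations


def _circle_from_two(a, b):
    # Circle with the segment a-b as its diameter.
    return (a[0] + b[0]) / 2, (a[1] + b[1]) / 2, math.dist(a, b) / 2


def _circle_from_three(a, b, c):
    # Circumscribed circle of a triangle; None if the points are collinear.
    d = 2 * (a[0] * (b[1] - c[1]) + b[0] * (c[1] - a[1]) + c[0] * (a[1] - b[1]))
    if d == 0:
        return None
    sa, sb, sc = (p[0] ** 2 + p[1] ** 2 for p in (a, b, c))
    ux = (sa * (b[1] - c[1]) + sb * (c[1] - a[1]) + sc * (a[1] - b[1])) / d
    uy = (sa * (c[0] - b[0]) + sb * (a[0] - c[0]) + sc * (b[0] - a[0])) / d
    return ux, uy, math.dist((ux, uy), a)


def smallest_enclosing_circle(points):
    """Brute force: the optimal circle is defined by two or three of the points."""
    pts = list(set(points))
    if len(pts) == 1:
        return pts[0][0], pts[0][1], 0.0
    candidates = [_circle_from_two(a, b) for a, b in combinations(pts, 2)]
    for triple in combinations(pts, 3):
        circle = _circle_from_three(*triple)
        if circle is not None:
            candidates.append(circle)
    best = None
    for cx, cy, radius in candidates:
        if all(math.dist((cx, cy), p) <= radius + 1e-9 for p in pts):
            if best is None or radius < best[2]:
                best = (cx, cy, radius)
    return best


def circularity(mask):
    """mask: 2D list of 0/1 values marking the pixels of one (non-empty) particle."""
    corners, area = set(), 0
    for row, line in enumerate(mask):
        for col, filled in enumerate(line):
            if filled:
                area += 1
                corners.update({(col, row), (col + 1, row),
                                (col, row + 1), (col + 1, row + 1)})
    _, _, radius = smallest_enclosing_circle(corners)
    return area / (math.pi * radius ** 2)


print(round(circularity([[1, 1], [1, 1]]), 4))  # 0.6366
```

A square particle gives 2/π ≈ 0.64, and elongated particles give smaller values. For real images, a faster algorithm (for example Welzl’s) applied to contour pixels only would be a more sensible choice.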


“It would be very easy to add an option for displaying a histogram of circularities, or even scatter plots of circularity vs surface.”

Super interesting. You’re giving me ideas on how I might integrate your python code into my Decent Espresso app (also open source and cross-platform https://decentespresso.com/downloads)

For example, as Hm Digital’s refractometers are bluetooth, I’ve looked at automating data collection from each shot, into the rest of an espresso shot’s history. Particle size and shape would be also interesting.

I’ve worked with the Smart Espresso Profiler (from Hungary) to develop an open standard on “espresso shot description” and it has metadata capability, where particle size info would sit nicely. Both Decent and SEP are supporting it, and possibly some other companies too.

-john


Awesome. I’d be happy to help with it. It does require a lot more of the user to measure their particles for each shot, but someone wanting to experiment with this might want to do it, and being able to log it into the Decent would make sense.


Hi Jon, let’s take this into email, nyeah? I’m john at decent espresso com.


Very interesting, thank you for your work and for sharing.

I find it hard to isolate small from big particles. When I use a cheap USB microscope, I see the fines (e.g. 50 µm diameter) clinging to the larger particles (e.g. 500 µm diameter). I would appreciate methods to isolate these.

An area of 0.1 mm² corresponds to a diameter of about 360 µm for a circular particle, so I think that is the resolution you get in your example. It could be higher when photographing a smaller sheet of paper.


Thanks Stefan. Yes, I’m aware of this phenomenon where fines stick to boulders. I’m pretty sure that for most filter-type grinds, my software will not be able to see these types of ~50 micron fines anyway, unless a crazy resolution camera is used. Otherwise they will be sub-pixel, so my software won’t be able to really tell you how many fines you have. But this will definitely be a potential problem when analyzing finer distributions like espresso, and it’s also a problem for sifting. I don’t have a solution to un-stick them right now.


I can imagine how using a lens for higher magnification might create all sorts of difficulties as the field of view would be reduced, and going for higher resolution imaging defeats the concept of using an easily available camera, but would you be able to count the sub-pixel fines by using the brightness of the single pixel as an indicator of size? I’m guessing you could maybe measure down to an order of magnitude smaller using brightness?


Exactly, on all points. Except fines are smaller so even if you zoom you might still get a large number of particles, you’ll just get very few larger particles. I’ve already coded in a surface vs brightness linear dependency for sub pixel particles that doesn’t enter into play with the current default threshold (58%). I want to actually test this by zooming in/out before including this by default. It looks like the smallest particles also apply more than one pixel on iPhones (maybe they’re Nyquist sampled so the smallest resolution element is ~3 pixels).
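One way to picture a linear surface vs brightness dependency for sub-pixel particles is a simple mixing model: a pixel that is partly covered by a dark particle sits somewhere between the paper brightness and the coffee brightness. The sketch below is only an illustration of that idea under stated assumptions; the function name, its parameters and the example values are hypothetical and are not taken from the app.

```python
def subpixel_area_mm2(pixel_value, background_value, particle_value, pixel_scale_mm):
    """Estimate the area of a particle smaller than one pixel.

    Assumes brightness varies linearly with the covered fraction of the pixel:
    background_value for an empty pixel, particle_value for a fully covered one.
    pixel_scale_mm is the side length of one pixel on the sheet, in mm.
    """
    fraction = (background_value - pixel_value) / (background_value - particle_value)
    # Clamp to the physically meaningful range in case of noise.
    fraction = min(1.0, max(0.0, fraction))
    return fraction * pixel_scale_mm ** 2


# A pixel slightly darker than the paper: 20% covered under the linear assumption.
area = subpixel_area_mm2(pixel_value=200, background_value=240,
                         particle_value=40, pixel_scale_mm=0.05)
print(round(area, 6))  # 0.0005
```

Whether such a model holds in practice would need the kind of zoom-in/zoom-out test mentioned in the comment, especially if the smallest particles are blurred over more than one pixel.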


*occupy not apply


It might be of some interest to you: there is a Kickstarter project for a fingertip-sized microscope for smartphones, a very small flat attachment with a light path from the LED redirected around the lens. I will share more info when it comes, but I guess it might help with fines and overall sharper detail. I backed it to help me with knife sharpening analysis and checking the edge … 🙂


Sounds like you are already on to it! I think this will be a wonderful tool for anyone involved in coffee


Thanks for the insight and contribution. I’m looking forward to learning more with Android Studio and the emulator software. My question: is the PSD directly affected by the temperature 🤒 of the burrs? Does this affect the sensory result for the Lido and the Forté?


That’s a good question; I don’t think it is affected by burr temperature, but I could be wrong. Maybe the burrs could expand when heated and the grind size could become finer. This could definitely be tested with my software 😛


Can’t wait to play with this! Seriously excited to measure this stuff more often.


Unfortunately I use a chromebook, so I’ll just have to sit and twiddle my thumbs until the app appears on the Play store, as I know nothing of computer languages and programming. Still, this looks like the start of a great adventure.


Ah, sorry about that. I don’t plan to go to the Play store, unfortunately. If you can borrow an old PC or MacBook you should be able to make it work.



What a very nice piece of software for a grinder geek 🙂 Thank you very much for sharing it. Is it hard to run on PC? I have no experience with python at all, or any other coding experience, but I would really like to use it as it would help me so much in my handgrinder collection analysis.

One idea about estimation of the 3D shape: maybe if there were multiple point light sources (LEDs?) around the area in a circular pattern and at a fixed height from the surface … the projected shadows could be used to get a better idea of the 3D shape. Not sure if it’s worth the complexity, but if better knowledge of the 3D shape was important then maybe …


Hey Pavel, thanks for your comment. Haha yes, I thought about the multiple cameras, but it would be extremely hard to collect data properly and write software to analyze it, for what I think is a small improvement. Maybe at some point I’ll try it. For now, see the long user manual; it explains how the 3D surface is estimated.

It is not hard to run it on PC, you just need a few more installation steps. Here’s a quick summary:

Install Python 3 (you can find the Windows installer on Google), and *make sure to check the box that says “Add Python to PATH” while installing*. Open a command prompt and enter the following commands, one at a time and separately:

pip install pillow

pip install numpy

pip install pandas

pip install matplotlib

Download the coffeegrindsize package as a zip on GitHub. Unzip the package. Locate the file “coffeegrindsize.py” in it and open it with Python.


Thank you very much, sounds really nice and easy. Regarding the 3D estimation … multiple cameras would probably be quite hard (though you could maybe use some photogrammetry tools like AliceVision to register the images and extract 3D point cloud data). That’s why I thought of multiple light sources at a constant distance from the center, regular angles apart and at a fixed height from the surface … it might then be possible to separately detect the particles and their corresponding shadows from those lights and extract more information about the shape …

If the lights are used in sequence … giving a sequence of images, other tools can be used to get some estimation of the surface normals of the particle, if there are enough pixels for that … giving some information about the “bumpiness” of the surface shot by the camera … Not saying that it’s important to know that 🙂 But I guess it might add precision to the surface area estimation?

I was thinking about this kind of analysis done in real time … if you imagine a grinder with a small camera taking pictures as it grinds and giving back information about the peak of particle size in real time, the grinder could self-adjust. I’m not sure if this type of analysis can be done fast enough though. It was just one idea I had when I tried to find a way for a grinder to actually know precisely what particle size it is set to … it seemed to be the hardest part to get real-time data about: the reality of the grind setting 🙂


Yes, having many cameras would allow us to truly resolve the 3D shape, a bit like some 3D scanners do, but this would be even harder. Doing real-time data analysis would be possible, but then it becomes very hard to separate clumps of particles.


Hi Jonathan,

just got Python (3.7.3) installed – thanks for the pip tips above.

Any advice on what works best for spreading grinds? I’m also battling chaff, which is a major pain in the butt to get rid of.

Regards,

Tom


Oh yeah chaff is a real pain. I tear up the tip of a Q-tip and use it to grab the chaff. For spreading grinds, I find it best to sprinkle with two fingers from a foot or two above the sheet.


I am trying to open this program but am getting this error now:

File "C:\coffeegrindsize-master\coffeegrindsize.py", line 4, in

from PIL import ImageTk, Image

File "C:\coffeegrindsize-master\PIL\__init__.py", line 42

raise AttributeError(f"module '{__name__}' has no attribute '{name}'")

^

SyntaxError: invalid syntax

Help?


It seems like you have not installed the pillow package. Here’s a short recap of how to install the app properly on Windows.

Install Python 3 (you can find the Windows installer on Google), and *make sure to check the box that says “Add Python to PATH” while installing*. Open a command prompt and enter the following commands, one at a time: pip install pillow, pip install numpy, pip install pandas, pip install matplotlib. Download the coffeegrindsize package as a zip on GitHub. Unzip the package. Locate the file “coffeegrindsize.py” in it and open it with Python.

If this still doesn’t work, try also “pip install Pillow” with a capitalized P
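When the install steps above don’t seem to take effect, a quick diagnostic can narrow things down. The sketch below is not part of the coffeegrindsize package; the function name and the module list are assumptions based on the imports and pip commands mentioned in this thread. It reports the interpreter version and tries to import each dependency. One detail worth checking: the `SyntaxError` in the traceback above points at an f-string, a syntax that only exists from Python 3.6 on, so an older interpreter may be the one actually running the script. For that reason the sketch itself avoids f-strings.

```python
import importlib
import sys

# Modules assumed from the thread: Pillow is imported as PIL, and ImageTk
# needs Tk support to be available.
REQUIRED = ["PIL", "PIL.ImageTk", "numpy", "pandas", "matplotlib"]


def check_environment(modules=REQUIRED, min_version=(3, 6)):
    """Return a list of human-readable problems (empty if none were found)."""
    problems = []
    if sys.version_info < min_version:
        problems.append("Python {}.{} is older than {}.{}".format(
            sys.version_info[0], sys.version_info[1],
            min_version[0], min_version[1]))
    for name in modules:
        try:
            importlib.import_module(name)
        except ImportError as exc:
            problems.append("cannot import {}: {}".format(name, exc))
    return problems


if __name__ == "__main__":
    print("Interpreter:", sys.executable)
    print("Version:", sys.version.split()[0])
    for problem in check_environment():
        print("Problem:", problem)
```

Running it with the same command used to launch `coffeegrindsize.py` shows which interpreter is in use and which imports fail, if any.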


Very nice. One practical thing this info could help with is knowing exactly when to change grinder burrs, by tracking how the grind size distribution changes over time. It could also help with other grinder maintenance, like motors or misalignments, by comparing against a target measurement from a new grinder. Good job, this is cool.


Thanks ! I agree !


I would like to ask, if I may, about the processing of the image. I went through the manual, but when you talk about thresholding, it’s not clear to me whether you do some processing of the image before it’s thresholded, or whether you just take the image as is. I have a feeling there is a lot that can be done to help the algorithm through some preprocessing (I do image processing and manipulation for a living 🙂), so I see a lot of the stuff that I do for other purposes that could be applied here. For example, the unevenness of lighting can be largely removed, and even the shadows could be dealt with. Also, when using an iPhone, one can still shoot raw, which would be way better for preprocessing. If you could send me some pictures, I could test this and send you back some flattened versions that could be thresholded much more aggressively to detect the smaller particles.

I have to wait with the installation as my home computer’s battery bloated a few days ago and I am waiting for the repairs … but once I have it running I can try using an iPhone macro lens, preprocessing, and stitching multiple close-up images together to get one with way more resolution. It will probably take a lot more time, but I guess still way less time than when I sifted through all 12 sieves on the Kruve 😀 (and I did … but a big problem there is that a lot of coffee is lost during the process … so much that I could not take the results seriously … I think I was missing around 0.7 g from a 10 g sample).

Oh … and for my composite from multiple pictures idea – do you compensate for the difference in distance/size in the center vs edge of the image ? If you do so then it would not be really any good to stitch one large image from multiple shots.


I don’t do distortion corrections or image treatments for now. It would be easy to also flat field by taking a first image without the particles. For now I limited thresholding also because I want to test the surface vs brightness of sub-pixel particles, which would allow measuring smaller particles with a threshold < 58%. If you know Python packages that help remove shadows or distortions in a very general way, I’m interested. I’m less interested in writing one from scratch. I already do composites of 12 pictures for my posts, but that’s with 12 samples just to get better statistics. Feel free to have a look at the GitHub directory for the exact algorithm to separate particles; I just made up something that is relatively fast and seems to work well (it also tries to ignore particles too close to one another).
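The flat-fielding idea mentioned here (using a first image of the sheet without particles) can be sketched in a few lines. This is an illustration only, not something the app does: the function name is hypothetical, images are plain nested lists of brightness values, and both pictures are assumed to be perfectly aligned and taken under identical lighting.

```python
def flat_field(image, blank, floor=1e-6):
    """Divide `image` by a particle-free `blank` frame to even out the lighting.

    The result is rescaled by the mean of the blank frame so that brightness
    values stay in the same range as the input.
    """
    n_pixels = sum(len(row) for row in blank)
    blank_mean = sum(sum(row) for row in blank) / n_pixels
    corrected = []
    for image_row, blank_row in zip(image, blank):
        corrected.append([
            pixel * blank_mean / max(reference, floor)  # avoid dividing by zero
            for pixel, reference in zip(image_row, blank_row)
        ])
    return corrected


# The right side of the sheet receives half as much light as the left side.
blank = [[200, 100]]
# The same kind of particle darkens both pixels to half of their blank value.
image = [[100, 50]]
print(flat_field(image, blank))  # [[75.0, 75.0]]
```

After the correction both pixels have the same value, so a single threshold treats them alike. With NumPy arrays the same operation is a one-line element-wise division.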


Ok, so stitching is safe as it would not interfere with anything. Regarding Python tools, I am not a coder, so I can’t help there … but it does not have to be done inside your app. There are enough tools for manipulating pixels, even free ones, and many of the techniques are easy to transfer to other software. Flattening the lighting with a picture without coffee is the simplest, but not very practical, as the picture would need to be guaranteed exactly the same. I guess it would be easier to do one slightly more aggressive detection/thresholding pass that still selects only the grounds, expand the mask by a few pixels, delete what’s supposed to be the coffee particles, fill the holes with colours from surrounding pixels, and apply some blur … that should be enough to flatten the lighting and push it to white … if you wanted to do it inside your tool. But I think it could be better to just manipulate the pixels outside and have the tool focus only on detection and measuring.


Thanks for the suggestions. I will soon add an option to collate CSV files, so stitching images won’t be required. This will be much faster.
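Until such an option exists, per-image CSV files can be combined by hand. The sketch below uses only Python’s standard `csv` module and assumes that all files share the same header row; the function name and the column names in the demo (`surface_mm2`, `circularity`) are hypothetical and are not the app’s actual output format.

```python
import csv
import os
import tempfile


def collate_csv(paths, out_path):
    """Concatenate CSV files that share one header; return the number of data rows."""
    header = None
    n_rows = 0
    with open(out_path, "w", newline="") as out_file:
        writer = csv.writer(out_file)
        for path in paths:
            with open(path, newline="") as in_file:
                reader = csv.reader(in_file)
                file_header = next(reader, None)
                if file_header is None:
                    continue  # skip empty files
                if header is None:
                    header = file_header
                    writer.writerow(header)
                elif file_header != header:
                    raise ValueError("header mismatch in {}".format(path))
                for row in reader:
                    writer.writerow(row)
                    n_rows += 1
    return n_rows


# Demo with two small made-up files written to a temporary folder.
tmp_dir = tempfile.mkdtemp()
samples = [[["0.52", "0.81"]], [["1.10", "0.64"], ["0.07", "0.92"]]]
inputs = []
for i, rows in enumerate(samples):
    path = os.path.join(tmp_dir, "sample_{}.csv".format(i))
    with open(path, "w", newline="") as f:
        demo_writer = csv.writer(f)
        demo_writer.writerow(["surface_mm2", "circularity"])
        demo_writer.writerows(rows)
    inputs.append(path)

merged_path = os.path.join(tmp_dir, "merged.csv")
n = collate_csv(inputs, merged_path)
print(n)  # 3
```

Combining the measured particles this way avoids stitching images altogether, since each picture is analyzed on its own.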


The tool sounds amazing! I’d love to start using it. However, when I download it to my Mac I don’t get the .app, only the .py. Quite strange, and I don’t know how to solve it. Thanks!


Ah, try looking in /dist/ and its subdirectories. I should probably add a main level shortcut !


Amazing! Works perfectly now. Thanks for the quick help !


Hi Jonathan,

Thank you for the software, excellent work! I have it running on Win10 with Anaconda 3.6 python. I am planning to have a deeper look at the algorithm, very interested in the details.

I’ve been thinking about using a blast of compressed air to spread the coffee over the paper, as currently I am getting too many clusters. Will report back if this works well.


Hey Andrew, thank you. A blast of air could help, but it might be easier to just sprinkle the coffee with 2 fingers from higher up above the sheet. I’ve been able to get decent results by doing this, spreading a small amount per second, and moving around to cover all of the sheet. You can use your second hand in a sprinkling motion below the other hand at ~half the height to interrupt the flow of coffee and spread it even more.


It would be interesting if there was a way to export an image shot in Portrait mode on an iPhone that has dual cameras. They clearly have depth information to support their ability to adjust the pseudo depth-of-focus, it would be interesting to leverage that somehow!


That’s interesting. As far as I know, the iPhone uses this 3D information just to simulate a camera focal length and then just saves that as a 2D image. If the two individual images can be saved though, it’s possible it could help provide 3D info, but to really get the full shape of coffee particles I think we’d need a few more angles. The problem will likely not be data collection, because you could just move a regular iPhone around a bit and take a few pictures. The much harder part will be the data analysis to align images and reconstruct 3D models.


I was just googling and it appears as though the depth map is embedded in the .heic image file. I’m not seeing any information on how someone might get to that data directly without developer access of some kind. I found this write up the most informative because it speaks to how Photoshop can use the .heic file’s depth map…meaning it’s theoretically possible at least! https://macprovideo.com/article/photoshop/depth-maps-in-photoshop-cc-2018

All that said, I would agree this won’t be an ideal 3D image to work with, but it certainly solves the alignment issue since that’s done by default in this method.


Hi Mike. I can have a look at it; I should be able to get the depth data out. But I don’t think it will be of any use for this task, as I don’t think there is much quality and depth resolution (if the data has enough resolution to separate the subject, foreground and background, it still probably does not have enough resolution for sub-millimeter particles :))


Yes that would definitely be a worry. The depth would need to be extremely precise to be useful. And from some photos I have seen the matching doesn’t always seem perfect to the order of millimeters.


I have been trying to sprinkle the coffee above a paper sheet, but this did not work for me. I guess the reason is that I am interested in espresso grind, so the particles are small and form clusters easily. It seems that rubbing the coffee between fingers makes even more clusters. Have you done any good measurements of espresso grinds?

I have uploaded a couple of pictures on my Github https://github.com/AndyZap/CoffeeParticles/tree/master/Images/2019_may_11

The scale object on the pictures is a metal ruler with 1 mm markings.

As you can see, I have a few “stand-alone” particles, and a lot of clusters. The clusters look very interesting: there is a kind of light-brown “thingy” and the coffee particles are embedded in it. Any idea what this is? The beans are medium roast Kenya Karumandi. This does not look like chaff. The problem with these clusters is that they are too big and skew the measurements. And there are too many to remove manually… I have not tried my compressed air idea yet.


Thanks for the comment Andrew. It’s definitely possible that measuring espresso grind with cameras will be challenging because of clumps. I haven’t tried yet, I’ll let you know if I find a way to get better measurements when I do.


Hello,

fantastic posts! I found my way to this site via James Hoffmann. Now, is it possible that my Mac (mid 2012 OS10.13.6 (17G7024)) is already too old to run the app? I get the message »[…]is not supported on this type of Mac«. Hoping there is a way to run it anyway.

Best regards from Germany!


Oh that’s weird ! I ran an early version on a friend’s 2008 Macbook and it worked. Could you send me a screenshot of the error through the contact form ?


I know nothing about python, but could this be transformed into a website with uploaded pictures?


Yes but it would require a web server dedicated to run it and store images, which I don’t have. Barista Hustle made a simpler version with a Java web app.


Jonathan, this just became my new favorite blog! I was searching around for solutions for measuring grind size, and found your app. In the past I have used a set of kruve sifters, and right now I’m 3d printing my own set of shaker sieves to work with the kruve screens and a vibration table. It’s not perfect, but I hope it will give me a reasonable way to compare my grinders in a more efficient manner.

Getting a sufficient distribution of coffee grounds seems to be a challenge for using your software. So far, I have had the best luck using vibration to distribute grounds with minimum clustering. I am no scientist, but it does have me wondering if grounds distribution could be improved with a Chladni plate?


Thanks ! Yes, sample preparation is the hardest part of imaging ! Sifting should allow you to get interesting results, but it also comes with its own challenges. Having an automated shaker will definitely make it less of a hassle. I don’t think a Chladni plate would really help imaging, because it would tend to concentrate particles along the nodes of the vibration patterns on the plate.


Hi Jonathan, I’m a mechanical engineering master’s student and coffee enthusiast from Brazil. I just discovered your blog (from James Hoffmann’s video, loads to thank him as well) and I have one word for it: GENIUS! All the science and methodology behind the posts you make are awesome. But this piece of software is simply amazing… Btw, I did not use it yet, so I can’t give any specific compliments, though I’m very excited about it. I’ll keep browsing now, thanks for all the insights and information!!


It would be better if it was a website where I could upload my picture and get a result. I don’t want to install software and what if I don’t have a Mac…

If it’s okay with the author, he or someone should make a web service. I could do it but it will take me a while as I have other priorities.


I’m so excited to use this software, but I use Windows 10. Can someone help me install the app using Python?


Follow these steps on Windows:

Install Python 3 (you can find the Windows installer on Google), and *make sure to check the box that says “Add Python to PATH” while installing*. Open a command prompt and enter the following commands, one at a time: pip install pillow, pip install numpy, pip install pandas, pip install matplotlib. Download the coffeegrindsize package as a zip on GitHub. Unzip the package. Locate the file “coffeegrindsize.py” in it and open it with Python.


For separating the grains, maybe it would help to use sticky paper and to shake the paper a bit until each grain sticks separately.

That’s not a bad idea, but they may also get stuck in clusters as soon as you spray them on

Hi Jonathan

I am also getting something similar to Zachary. Unfortunately I am a novice with Python.

I am running Ubuntu 20.04 Linux and have Python3 and 3.8 installed.

I ran the pip commands, and I get this when running the code:

$ python3 ./coffeegrindsize.py
Traceback (most recent call last):
  File "./coffeegrindsize.py", line 4, in <module>
    from PIL import ImageTk, Image
ImportError: cannot import name 'ImageTk' from 'PIL' (/usr/lib/python3/dist-packages/PIL/__init__.py)

I tried installing Pillow again with pip3 (I think pip3 is pip for Python 3 on Linux), this time with an uppercase initial, but the name does not seem to be case sensitive.

Do you have any suggestions?

Many thanks in advance!

I found out: I installed python3-pil.imagetk and it started. Now let’s see if it works 🙂
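
The path in the traceback above (/usr/lib/python3/dist-packages) is where Ubuntu keeps its system-packaged Python modules, and Ubuntu ships `ImageTk` in the separate python3-pil.imagetk package. A rough way to see which copy of PIL Python picks up, and whether `ImageTk` can be imported from it, is sketched below. The helper names `module_origin` and `can_import` are invented for this example and are not part of coffeegrindsize or Pillow.

```python
import importlib
import importlib.util


def module_origin(name):
    """Return the file a module would be loaded from, or None if not found."""
    try:
        spec = importlib.util.find_spec(name)
    except (ImportError, ValueError):
        return None
    return spec.origin if spec is not None else None


def can_import(name):
    """Return True if importing `name` succeeds, False on ImportError."""
    try:
        importlib.import_module(name)
    except ImportError:
        return False
    return True


if __name__ == "__main__":
    # Shows whether PIL comes from the system packages or from a pip install.
    print("PIL loaded from:", module_origin("PIL"))
    print("PIL.ImageTk importable:", can_import("PIL.ImageTk"))
```

Run it with the same command used for the app (here `python3`), so that it reports on the same interpreter.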

Oh ! Interesting, I didn’t need that version of PIL. I’m glad that worked out.

Hi Jonathan,

Great site and description of your app! Found it through James Hoffmann too.

I wanted to install it on my Mac, but the installation always crashes before it really begins. Might it be an issue with Big Sur?

Cheers and thanks for your help

Nik

I’m having the same issue – were you able to find a solution?

Unfortunately not yet…

Hi Nik. Try upgrading to the latest Big Sur. It works for me on 11.2.3. I never had 11.1, though, so I can’t say for sure.

Hi there. Just wanted to say thanks! This is a lot of fun. I have been using this quite a bit recently to assess different grinders and settings. Impressive GUI, and it seems to work reasonably well if care is taken in preparing the sample and taking a decent shot. All the best! – Josh

I think this program doesn’t work in version 11.1 of macOS. 😥

Hi Sinan. Try upgrading to the latest Big Sur. It works for me on 11.2.3. I never had 11.1, though, so I can’t say for sure.

I’m also having trouble running it after unzipping and telling the OS to allow it to run. I’m on macOS 11.1. It gives a log readout, but I don’t want to gum up your comments section with the whole thing. I’m not sure which part is relevant.

Hi Andrew. Try upgrading to the latest Big Sur. It works for me on 11.2.3. I never had 11.1, though, so I can’t say for sure.

Hi everybody,

After a few days of hair pulling, I still cannot manage to run this application on either old-school Windows Vista or Lubuntu.

I’m not even sure my Python install is correct or compatible with my OS.

Is there by any chance someone who could share a detailed tutorial for a noob like me?

Thanks

You can try this; if it doesn’t work, feel free to comment below and I can try to help more (my Windows memory is rusty, however). Install Python 3 (you can find the Windows installer on Google), and *make sure to check the box that says “Add Python to PATH” while installing*. Open a command prompt and enter the following commands, one at a time: pip install pillow, pip install numpy, pip install pandas, pip install matplotlib. Download the coffeegrindsize package as a zip on GitHub. Unzip the package. Locate the file “coffeegrindsize.py” in it and open it with Python.

Hello everyone,

I built an MSI installer that makes it possible to run this app on Windows without installing Python. I also built a Mac installer (DMG) that might be a bit easier to use and will run on older macOS versions (it also has an icon!). I don’t know if the Mac version will run on 11.1, so it would be great if someone could try that.

Windows might complain about the security of installing the app, but I think you should be able to override its objections if you are persistent (and willing to take the risk 🙂).

https://github.com/csatt/coffeegrindsize/releases/tag/v1.0.0

– Chris Satterlee

Thank you so much ! I looked this up a while ago and never took the time to fully reach the end of that particular rabbit hole.

I hope it helps more people use this great tool! You know, you’d have more time for things like this if you gave up that astrophysics distraction of yours and focused on the important stuff…

haha, very funny 😉

I upgraded my MacBook Pro to Big Sur (11.2.3). The installer worked fine and the app seems to run OK (not much testing). Notably, Jonathan’s app download ALSO works for me on 11.2.3. So the problems that people had with 11.1 might be fixed by upgrading Big Sur to the latest. It’s hard for me to say because I skipped over 11.1 (I upgraded from Mojave).

NOTE: Big Sur seems to have one more layer of resistance to installing “unidentified developer” apps. The first time you Control-Click the app and choose Open, there are only two choices: Move to Trash and Cancel. Choose Cancel, then Control-Click and choose Open again. This time there will be an Open button.

I added a DMG for a version that will run natively on Apple Silicon Macs running Monterey (12.x) or higher. Since this app is pretty computationally intensive, the native version is noticeably faster than running the Intel version via Rosetta. Particle detection for one example I tried completes in 16 seconds versus 29 seconds for the Intel/Rosetta version.
