In this post, I present a new tool to create custom brew water recipes, which can be built from any soft water rather than distilled water.

An In-Depth Analysis of Coffee Filters

In this longer than usual post, I do a very detailed microscope analysis of various V60, chemex and siphon paper and cloth filters.

How Coffee Varietals and Processing Affect Taste

In this post, I used tasting notes from 1500 bags of coffee too bring out how perceived flavor is affected by coffee varietal and processing

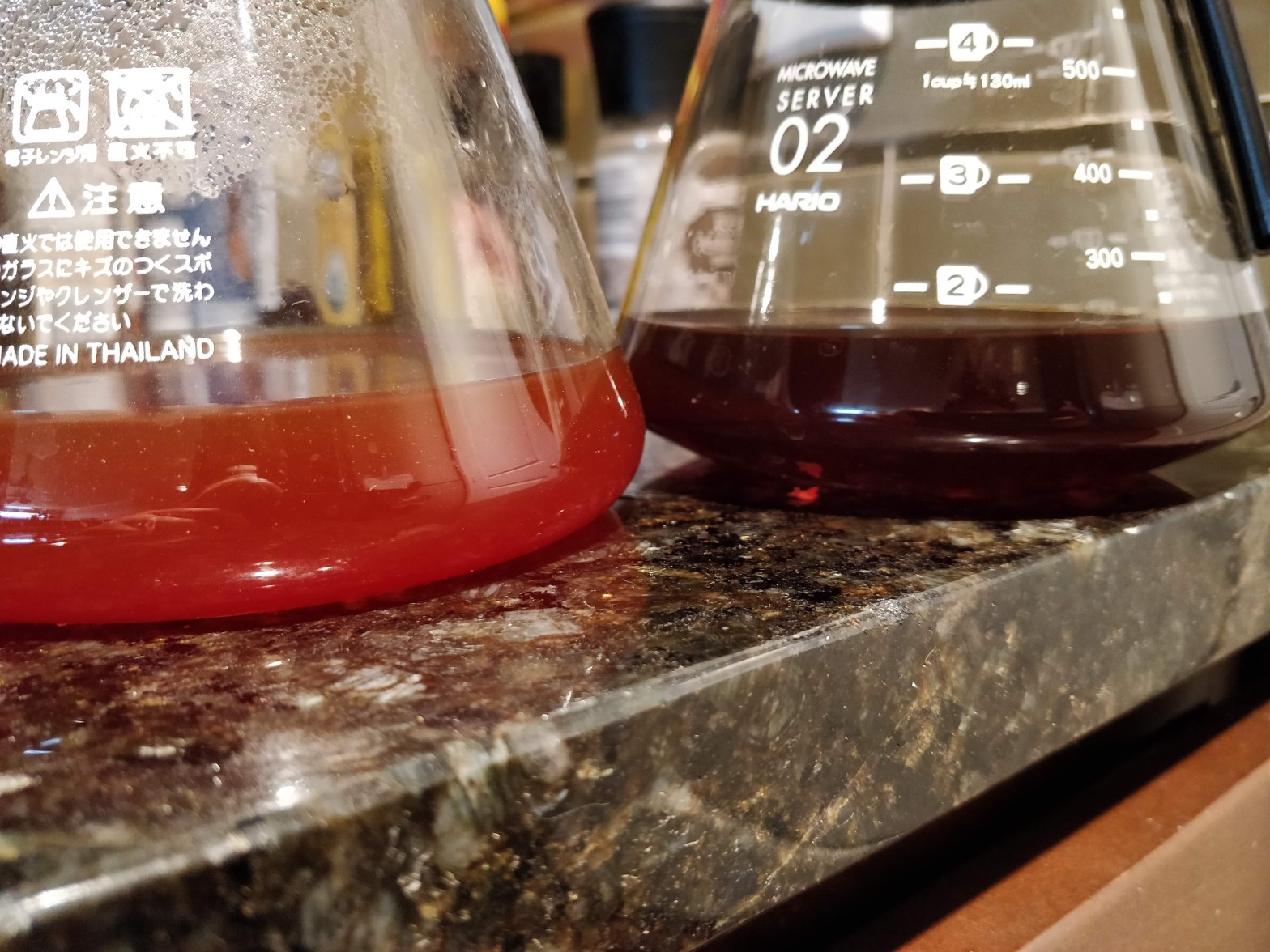

Why do Percolation and Immersion Coffee Taste so Different ?

In this post, I discuss the physics of extraction by percolation and immersion and how they lead to very different taste profiles.

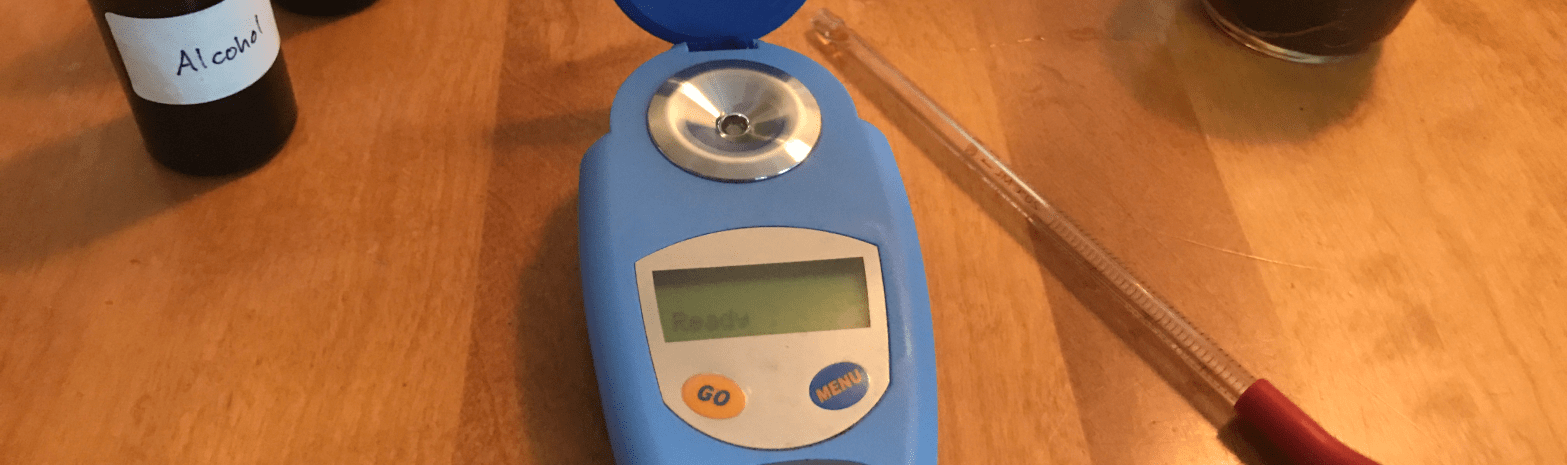

The Repeatability of Manual V60 Pour Overs

Today I decided to measure how repeatable and consistent my manual V60 pour overs are. My expectations were very low, given how variable an average extraction yield I often get when I brew the same coffee a few days apart. To do this, I used some older coffee I had left from a local roasterContinue reading "The Repeatability of Manual V60 Pour Overs"

A V60 Pour Over Video

Today I decided to release publicly one of the V60 videos from my Patreon. I plan to make a better quality video eventually for my blog, but in the meantime I thought this would be interesting to a wider audience. Please view this recent post I made about what is going on with Patreon ifContinue reading "A V60 Pour Over Video"

About Patreon

I realize I haven't talked a lot about my Patreon page on this blog yet, so I thought I'd update you all about it in a short blog post. I might remove it later, if it becomes irrelevant, and because I am trying to make this blog a repository of useful resources rather than updatesContinue reading "About Patreon"

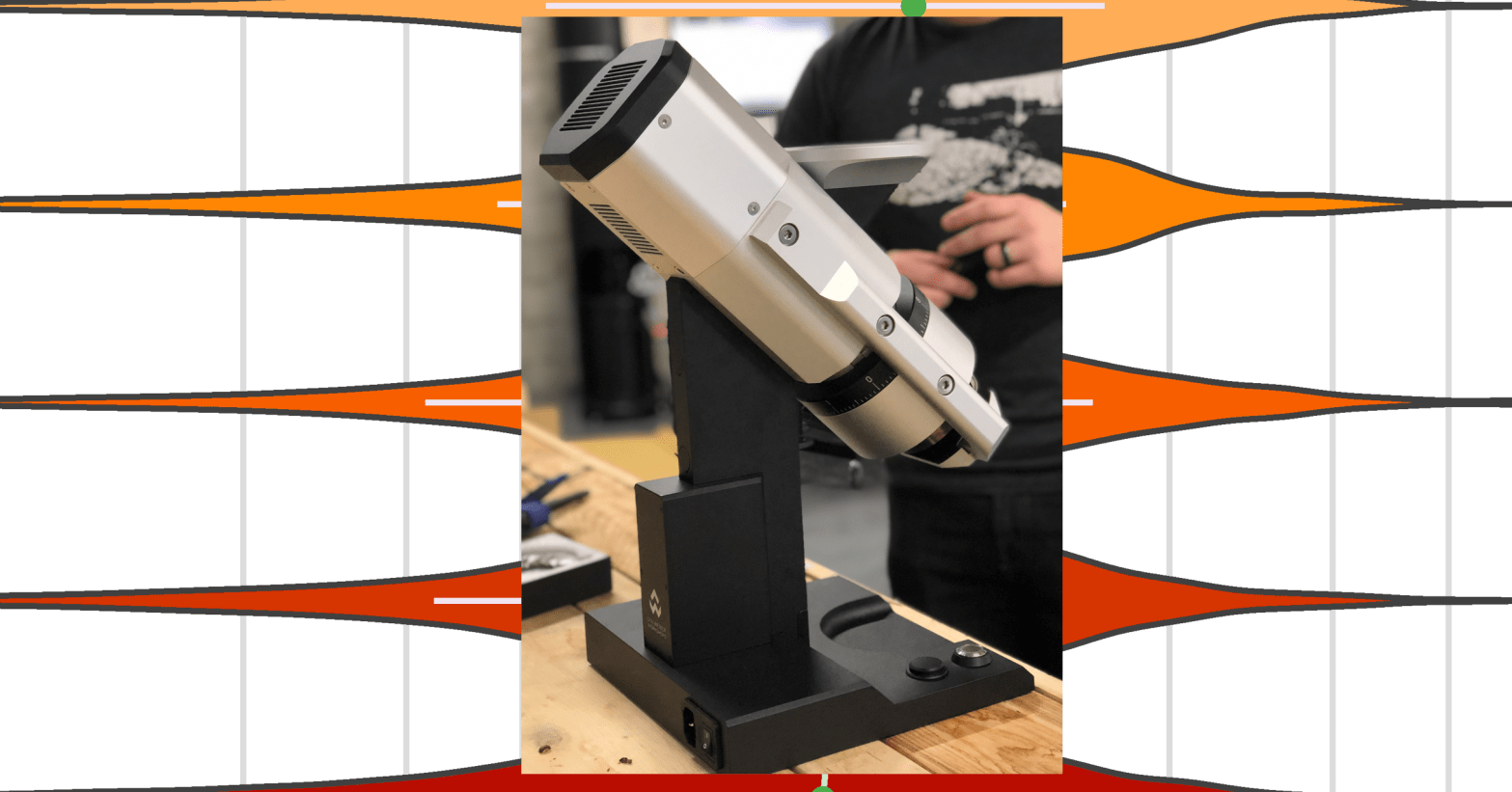

Seasoning Grinder Burrs and Grind Quality

This post is a detailed discussion of the effects of coffee grinder seasoning on particle size distributions.

Grind Quality and the Popcorning Effect

I often heard worries in the coffee community about a difference of quality in the coffee grind size distribution when grinding with a full hopper versus a single dose of coffee in an otherwise empty hopper. The idea behind this is that coffee beans forced through the rotating grinder burrs have no choice but toContinue reading "Grind Quality and the Popcorning Effect"

An App to Measure your Coffee Grind Size Distribution

[Edit April 20 2021: Chris Satterlee generously developed install packages for both OS X here and Windows here ! His full GitHub repo is here. I'm very thankful for this help (it's not straightforward at all to do this).] [Edit April 25 2019: Please note this is not an iPhone or Android app, and IContinue reading "An App to Measure your Coffee Grind Size Distribution"